Route every model call through one LLM API gateway

Router One is an OpenAI-compatible LLM API gateway for GPT, Claude, Gemini, and DeepSeek calls from apps, Claude Code, and Codex, with model routing, provider fallback, cost traces, and China-friendly billing.

- One endpoint for apps, Claude Code, Codex CLI, and OpenAI-compatible SDKs

- Model routing and provider fallback by health, latency, and cost policy

- Wallet top-ups via WeChat Pay, Alipay, Stripe, and USDT/USDC

After a request reaches Router One: route, bill, and trace one call

- Route result: healthy provider first; auto-switch on failure
- Cost trace: $0.0034 · 1,248 tokens, visible per request
- Wallet balance: WeChat / Alipay / USDT, pay as you go

- OpenAI-compatible API

- No prompt or completion storage

- Per-request cost & latency traces

- Automatic same-family fallback

- Flexible pay-as-you-go billing

Use Claude Code and Codex through one gateway

Point coding agents at Router One's OpenAI-compatible and Anthropic-compatible endpoints to centralize routing, fallback, billing, and traces.

Fewer rate-limit stalls

Independent API keys and gateway policy reduce the impact of any single provider's rate limits.

Cost controls

Route by provider cost policy, with precise per-token billing.

Request-level visibility

Real-time visibility into token usage and cost per request.

```bash
# Claude Code → Route through Router One
export ANTHROPIC_BASE_URL="https://api.router.one"
export ANTHROPIC_API_KEY="sk-xxx"
export ANTHROPIC_AUTH_TOKEN="sk-xxx"
```

OpenAI-compatible routing, tracing, and billing in one API layer

Keep model calls, provider fallback, observability, and payments in one layer while your app talks to a single OpenAI-compatible API.

Unified entry point

Apps, Claude Code, Codex CLI, and OpenAI-compatible SDKs can share one endpoint instead of multiple keys and bills.

Routing and fallback

Choose providers by health, latency, and cost policy; switch automatically when an upstream path fails.

Request observability

Trace model, tokens, cost, latency, and route result for every call so teams can debug slow or expensive requests.
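To make those trace fields concrete, here is a minimal sketch of what a per-request trace record might hold and how a team could filter a batch of them for slow or expensive calls. The `RequestTrace` class, its field names, the model names, and the thresholds are all hypothetical illustrations, not Router One's actual trace schema or API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RequestTrace:
    """Hypothetical per-request trace record; field names are illustrative."""
    model: str
    prompt_tokens: int
    completion_tokens: int
    cost_usd: float
    latency_ms: float
    route_result: str  # e.g. "primary" or "fallback"

    @property
    def total_tokens(self) -> int:
        return self.prompt_tokens + self.completion_tokens


def flag_for_review(traces: List[RequestTrace],
                    max_latency_ms: float = 2000.0,
                    max_cost_usd: float = 0.01) -> List[RequestTrace]:
    """Return the traces that exceed either the latency or the cost threshold."""
    return [t for t in traces
            if t.latency_ms > max_latency_ms or t.cost_usd > max_cost_usd]


traces = [
    RequestTrace("model-a", 1000, 248, 0.0034, 850.0, "primary"),
    RequestTrace("model-b", 4000, 1200, 0.0300, 3100.0, "fallback"),
]
for t in flag_for_review(traces):
    print(t.model, t.total_tokens, t.cost_usd, t.latency_ms, t.route_result)
```

Keeping the route result next to cost and latency lets a slow call be traced back to, for example, a fallback hop rather than to the model itself.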

Wallet and budgets

Wallet top-ups are live via WeChat Pay, Alipay, Stripe, and USDT/USDC, giving teams a practical spending control layer.

LLM model routing and provider fallback

Route GPT, Claude, Gemini, and DeepSeek calls from apps, Claude Code, and Codex by health, latency, and cost policy.

Auto Fallback

If a provider degrades or returns errors, traffic reroutes to another in the same model family. The fallback chain is visible in your request trace.
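The fallback behavior described above can be sketched as a loop over same-family providers that records each hop. This is a minimal illustration under stated assumptions, not Router One's implementation: `UpstreamError`, `call_with_fallback`, and the provider functions are hypothetical, and the provider list is assumed to be already filtered to one model family and sorted by routing preference.

```python
from typing import Any, Callable, Dict, List, Tuple


class UpstreamError(Exception):
    """Stands in for a timeout or upstream error from a provider call."""


Provider = Tuple[str, Callable[[Any], Any]]


def call_with_fallback(providers: List[Provider],
                       request: Any) -> Tuple[Any, List[Dict[str, str]]]:
    """Try same-family providers in order and return (response, fallback chain).

    `providers` is assumed to be pre-filtered to one model family and already
    ordered by routing preference.
    """
    chain: List[Dict[str, str]] = []
    for name, call in providers:
        try:
            response = call(request)
        except UpstreamError as exc:
            # Record the failed hop, then move on to the next provider.
            chain.append({"provider": name, "status": "error", "detail": str(exc)})
            continue
        chain.append({"provider": name, "status": "ok"})
        return response, chain
    raise UpstreamError(f"all providers failed: {chain}")


def flaky_provider(request: Any) -> Any:
    raise UpstreamError("timeout")


def healthy_provider(request: Any) -> Any:
    return f"echo: {request}"


response, chain = call_with_fallback(
    [("provider-a", flaky_provider), ("provider-b", healthy_provider)], "Hello!"
)
print(response)
print(chain)
```

The returned `chain` is the kind of data a request trace would surface: here, one failed hop on `provider-a` followed by a successful call on `provider-b`.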

Cost Optimization

Prefer lower-cost providers for eligible same-model routes while keeping latency, health, and quality weights in view. Reduce spend without changing app code.

Latency-Aware

Latency-first routing prioritizes endpoints with lower recently measured latency.
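The FAQ on this page says latency is tracked as an EWMA (exponentially weighted moving average). A minimal sketch of that idea follows: each new sample is blended into a running average, so recent measurements count more than old ones. The `EwmaLatency` class, the `alpha` smoothing value, and `lowest_latency` are hypothetical names chosen for illustration, not Router One's internals.

```python
from typing import Dict, Optional


class EwmaLatency:
    """Exponentially weighted moving average (EWMA) of one endpoint's latency."""

    def __init__(self, alpha: float = 0.2) -> None:
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha  # higher alpha reacts faster to new samples
        self.value: Optional[float] = None  # no samples observed yet

    def observe(self, latency_ms: float) -> float:
        if self.value is None:
            self.value = latency_ms  # the first sample seeds the average
        else:
            self.value = self.alpha * latency_ms + (1 - self.alpha) * self.value
        return self.value


def lowest_latency(trackers: Dict[str, EwmaLatency]) -> str:
    """Name of the endpoint with the lowest current EWMA (at least one must be measured)."""
    measured = {name: t.value for name, t in trackers.items() if t.value is not None}
    return min(measured, key=lambda name: measured[name])


a, b = EwmaLatency(alpha=0.5), EwmaLatency(alpha=0.5)
for sample in (100.0, 200.0):
    a.observe(sample)
for sample in (300.0, 100.0):
    b.observe(sample)
print(a.value, b.value, lowest_latency({"endpoint-a": a, "endpoint-b": b}))
```

Here `endpoint-b`'s latest sample is faster, but its slower history keeps its average higher, so `endpoint-a` still wins. With `alpha=0.9`, the most recent sample dominates and `endpoint-b` would come out ahead.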

Quality Scoring

Assign quality weights per project and per model to balance cost, speed, and output quality.

How routing decides

For each request, Router One scores candidate providers on recent latency, posted per-model cost, and rolling error rates. It invokes the selected provider first; if a timeout or upstream error occurs, it records the retry and fallback decisions in the request trace.
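One way to picture this scoring is a weighted penalty over the three signals, with per-project weights deciding what matters most. The sketch below is an assumption-labeled illustration, not Router One's actual formula: `Candidate`, `rank_candidates`, the normalization by the largest value, and the weight names `"latency"`, `"cost"`, and `"errors"` are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Candidate:
    name: str
    latency_ms: float     # recent latency, e.g. an EWMA
    cost_per_mtok: float  # posted price per million tokens
    error_rate: float     # rolling error rate between 0.0 and 1.0


def rank_candidates(candidates: List[Candidate],
                    weights: Dict[str, float]) -> List[Candidate]:
    """Order candidates best-first by a weighted penalty where lower is better.

    Latency and cost are divided by the largest value among the candidates, so
    all three terms fall on a 0..1 scale before the weights are applied.
    """
    max_latency = max(c.latency_ms for c in candidates) or 1.0
    max_cost = max(c.cost_per_mtok for c in candidates) or 1.0

    def penalty(c: Candidate) -> float:
        return (weights.get("latency", 0.0) * c.latency_ms / max_latency
                + weights.get("cost", 0.0) * c.cost_per_mtok / max_cost
                + weights.get("errors", 0.0) * c.error_rate)

    return sorted(candidates, key=penalty)


candidates = [
    Candidate("provider-a", latency_ms=400.0, cost_per_mtok=3.0, error_rate=0.01),
    Candidate("provider-b", latency_ms=900.0, cost_per_mtok=2.0, error_rate=0.00),
    Candidate("provider-c", latency_ms=300.0, cost_per_mtok=3.0, error_rate=0.20),
]
latency_first = {"latency": 0.5, "cost": 0.1, "errors": 0.4}
cost_first = {"latency": 0.2, "cost": 0.6, "errors": 0.2}
print([c.name for c in rank_candidates(candidates, latency_first)])
print([c.name for c in rank_candidates(candidates, cost_first)])
```

With latency-first weights, `provider-c` is fastest but its 20% error rate drops it behind `provider-a`; with cost-first weights, the cheaper `provider-b` ranks first. The ranked list also gives a natural order to try during fallback.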

Billing and payments are clear from day one

Router One does not ask you to integrate first and decode the bill later. Wallet pay-as-you-go billing, per-request cost records, and multiple top-up channels are built into the gateway.

View pricing methodology

Wallet pay-as-you-go

Top up first, then pay for actual token usage. Subscription plans are live, and wallet balances remain available.
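Paying for actual token usage comes down to simple arithmetic: input tokens times the input price, plus output tokens times the output price. The sketch below uses hypothetical per-million-token prices and a hypothetical `request_cost` helper; real rates are on the models page. It uses `Decimal` rather than floats so that many small charges add up without rounding drift.

```python
from decimal import Decimal

MILLION = Decimal(1_000_000)


def request_cost(prompt_tokens: int, completion_tokens: int,
                 input_per_mtok: Decimal, output_per_mtok: Decimal) -> Decimal:
    """Exact cost of one request from per-million-token input and output prices."""
    return (prompt_tokens * input_per_mtok
            + completion_tokens * output_per_mtok) / MILLION


# Hypothetical prices: $3 per million input tokens, $15 per million output tokens.
cost = request_cost(1200, 300, Decimal("3"), Decimal("15"))
balance = Decimal("10.00") - cost  # pay-as-you-go: debit the wallet by actual usage
print(cost, balance)
```

At these assumed rates, 1,200 input tokens and 300 output tokens cost (3,600 + 4,500) / 1,000,000 = $0.0081, leaving $9.9919 of a $10.00 top-up.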

WeChat Pay and Alipay

Top up in RMB for GPT, Claude, Gemini, and DeepSeek calls without a US credit card.

USDT / USDC

Stablecoin top-ups support cross-border teams, Web3 products, and card-limited workflows.

Per-request cost traces

Every call shows model, tokens, cost, and routing result so teams can investigate unexpected spend.

Built for stablecoin-funded AI teams

Web3 teams top up with USDT or USDC, issue separate API keys for agents and bots, set hard budget caps, and trace every model call by token, latency, and cost.
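Separate keys with hard caps mean one runaway agent cannot drain the whole wallet. Below is a minimal sketch of a per-key cap that refuses any charge that would cross the limit. `KeyBudget`, `BudgetExceeded`, and the key names are hypothetical and do not reflect Router One's budget API.

```python
from decimal import Decimal
from typing import Dict


class BudgetExceeded(Exception):
    """Raised when a charge would push an API key past its hard cap."""


class KeyBudget:
    """Hard spending cap for one API key (hypothetical sketch)."""

    def __init__(self, cap_usd: Decimal) -> None:
        self.cap = cap_usd
        self.spent = Decimal("0")

    def charge(self, cost_usd: Decimal) -> Decimal:
        """Record a request's cost and return the remaining budget.

        The charge is refused, and nothing is recorded, if it would exceed the cap.
        """
        if self.spent + cost_usd > self.cap:
            raise BudgetExceeded(f"charge {cost_usd} exceeds remaining {self.cap - self.spent}")
        self.spent += cost_usd
        return self.cap - self.spent


# One budget per key, so a runaway agent cannot drain the shared wallet.
budgets: Dict[str, KeyBudget] = {
    "agent-key": KeyBudget(Decimal("0.02")),
    "bot-key": KeyBudget(Decimal("1.00")),
}
print(budgets["agent-key"].charge(Decimal("0.0081")))
print(budgets["agent-key"].charge(Decimal("0.0081")))
try:
    budgets["agent-key"].charge(Decimal("0.0081"))
except BudgetExceeded as exc:
    print("blocked:", exc)
```

One design consideration: the exact cost of a call is only known after the response arrives, so a real gateway enforcing a hard cap would likely need to reserve an estimated amount before forwarding the request and settle the difference afterward.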

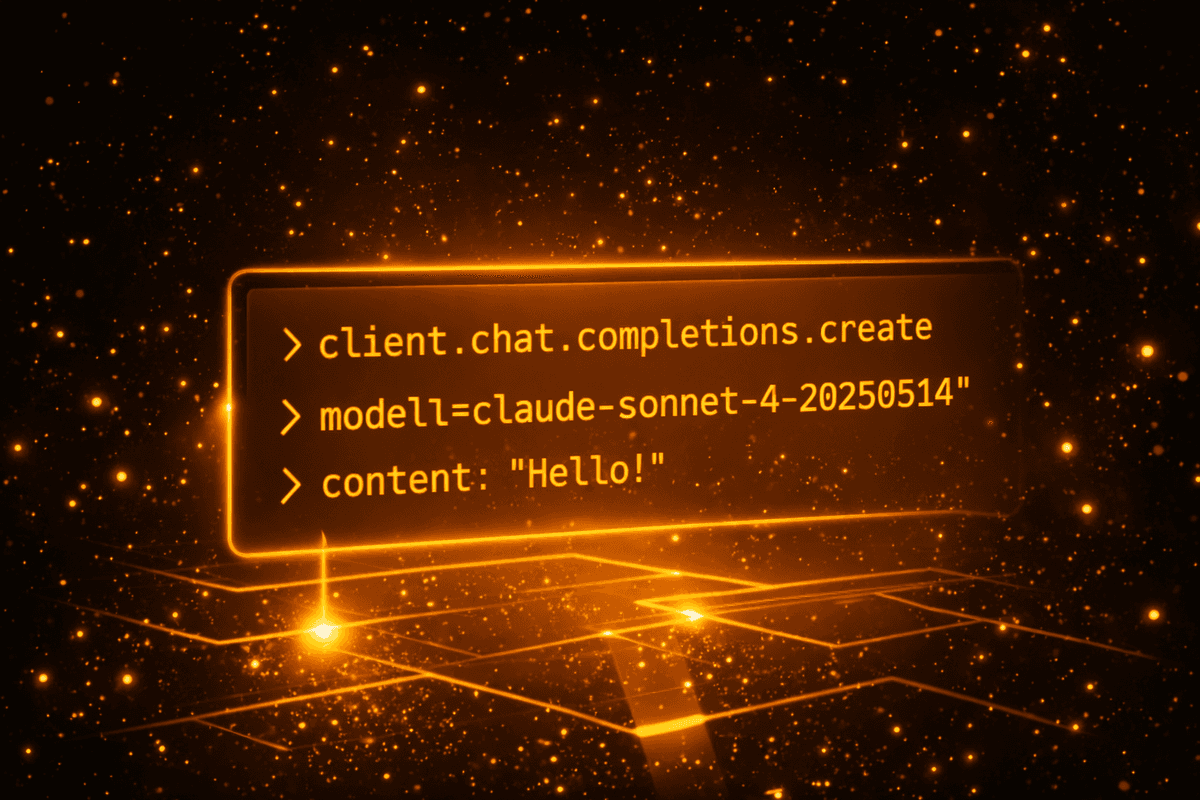

A Few Lines of Code to Get Started

Whether you call the aggregated API or configure AI coding tools, it only takes a few lines.

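```python
from openai import OpenAI

# Point the standard OpenAI client at Router One's OpenAI-compatible endpoint
client = OpenAI(
    base_url="https://api.router.one/v1",
    api_key="sk-xxx",  # your Router One API key
)

response = client.chat.completions.create(
    model="claude-sonnet-4-20250514",
    messages=[{"role": "user", "content": "Hello!"}],
)

print(response.choices[0].message.content)

# Works with any OpenAI-compatible SDK
```

Explore Router One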

Detailed guides and landing pages for the specific problems we solve.

Common questions

Quick answers on compatibility, China access, payment, and data handling.

- Is Router One OpenAI-compatible? Will my existing code work?

- Yes. Router One exposes an OpenAI-compatible endpoint at https://api.router.one/v1 and an Anthropic-compatible endpoint at https://api.router.one. Most apps, SDKs, Claude Code, and Codex CLI work with a single base-URL change, with no rewrite needed.

- Can I use Router One from mainland China without a VPN?

- Yes. Router One is reachable from mainland China without a VPN or proxy. The published China latency benchmark measured a p50 of 110-130 ms across Beijing, Shanghai, and Shenzhen; results on individual networks may vary.

- How do I pay without a foreign credit card?

- Top up your wallet in RMB with WeChat Pay or Alipay, pay by card through Stripe, or use USDT/USDC stablecoins on six chains. No foreign credit card is required, and subscription plans are also available.

- Which models can I call through one endpoint?

- One unified endpoint reaches 25+ supported models, including GPT, Claude, Gemini, DeepSeek, Mistral, and Llama. Browse the full catalog with per-token pricing on the models page.

- How do smart routing and automatic fallback work?

- Router One scores providers on EWMA (exponentially weighted moving average) latency, posted cost, and recent error rate, then routes according to the weights you set per project. If a route degrades, traffic automatically fails over to a healthy same-family provider, and the decision is recorded in your per-request trace.

- Does Router One store my prompts or completions?

- No. Requests are proxied in real time, and prompt and completion bodies are not retained. Router One logs only metadata (model, tokens, latency, cost) for billing and observability.

Looking for more detail? See the full FAQ

Route your next model call through Router One

Start with one OpenAI-compatible endpoint, then turn on smart routing, fallback, request traces, and wallet budget control. Integrate in minutes and pay for actual usage.